How we created portraits using a remotely controlled robot

When the first lockdown struck in March 2020, all those headlines about technological advancements, life on Mars, AI, and robotics that were supposed to help us when we needed them most sounded like a fairytale in a stone age. All we really had were smart vacuum cleaners, Alexas, and iWatches that were no more useful than a nail gun on an inflatable dinghy.

Photography was one of the industries left stranded. On the first day of the lockdown, artists and the industries that depended on them were caught with their creative pants down. Some experimented with photography over Zoom or FaceTime, but many soon learned this technique was as good as a painter moving a canvas against a brush – possible but too limited. Innovators in the big camera companies were silent. Having enjoyed an almost 90% drop in camera sales since 2010, the best they could do was release social media hashtags to inspire the remaining 10% to use their cameras more at home.

Here’s a brief history of camera innovations. If in the 1960s Time magazine had asked an England-based photographer to create a portrait of Leonid Brezhnev in the Kremlin, she would have spent several days and thousands of the client’s pounds to travel to the location and then back. If in 2022 the same photographer had to create a portrait of the corrupt dictator Putin in his secret £1bn dacha, she would spend several days and thousands of the client’s pounds to go there and back. The main difference (other than digital files) is that Putin’s face would have more details on the cover. But the loss of several days and thousands of pounds is still there.

In the past decades, a big change has been to peel the photographer’s eye away from a viewfinder and make them capture images while looking at the picture on the screen. But in 2022, there is no reason why a camera should still be attached to the screen. It’s only natural evolution to hold the screen in your hands while the camera itself is elsewhere completely, perhaps on another continent, roaming freely at your finger’s command, like some computer game character.

In 2022, I should be able to wake up in my bed, book a camera robot on some Uber-like app and have it delivered to Putin’s dacha like some Deliveroo meal 30 min later. With some help from a local photography assistant, Time magazine would have the files for their Worst Dictator of the Century story by the afternoon. In the meantime, I would be on another assignment, connected to a different robot in Japan while having sushi for lunch still in bed. That would free a week of my time, cut unnecessary air travel, the client would thank me for saving £10k of their money, and the headlines about technological advances would match the reality more.

This is not even science fiction. It’s the technology of yesterday. But, as Napoleon Bonaparte allegedly said, if you want something done, do it yourself.

Snowflake

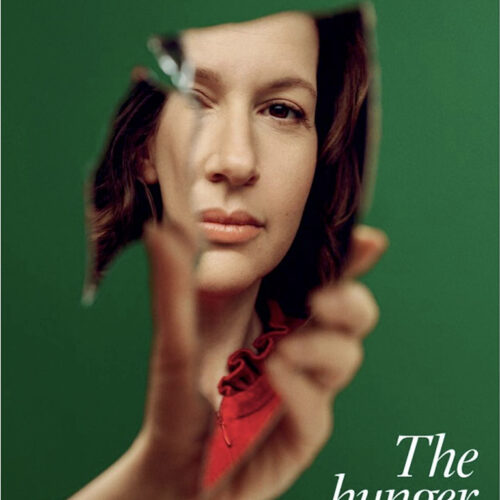

The first step was to understand whether the photographer could use the tool without compromising creative expression. Photography is often assumed to be an intimate process where the photographer has to be physically present in the same room as the sitter. To show that it’s not the case, we constructed a basic robot photographer called Snowflake.

Snowflake was a simple telepresence robot with a screen, speakers, and a webcam with a microphone that enabled two-way communication. Equipped with a mobile camera carrying a 40MP sensor and a Leica lens, it was boosted with remote control capabilities to enable full control of both the robot and the camera from anywhere in the world. Sane Seven used it to create the 4th series of The Women in Data portraits celebrating the 20 most prominent Women in Data and Technology.

1. What are the challenges of making portraits remotely?

Imagine you have to take a portrait but you can only see where the person was a second or two ago. Saying ‘stop, this pose is perfect’ could catch a very different pose from the one you are trying to capture. This happens when there is a poor internet signal at either end and the live camera feed gets delayed. It’s an interesting challenge that doesn’t happen in conventional portrait settings.

Also, the live camera feed is displayed on a small screen (7 cm × 15 cm), which makes it difficult to confirm the focus or even know where the person is looking. It’s not the same as using a mirrorless camera screen where you can confirm what you see by looking at the scene past the screen. It’s more like driving a rover on the Moon – all discoveries happen on the screen. But all these problems were created by the technical limitations of the present project, not the concept of remote photography in general.

This robot was just a low-budget proof of concept. It was like a disposable film camera in terms of its abilities and functionality, but we still managed to create some simple but relatively decent portraits. It was very stressful because the sitters were high-profile women and we ran into every possible problem, from overheating systems and huge signal delays to the robot losing its balance on carpets or its screen falling off during transportation.

But if the robot were well built, we feel it would be a natural extension of the photographer’s movements, allowing them to retain the same or a similar artistic style. It is still the same photography tool, even if it’s detached from the screen and separated from it by 1000 miles.

2. How do you handle things like lighting and posing?

We used only natural light in this project. The portraits were created in people’s homes and the goal was to capture the actual atmosphere where people worked during the lockdown. Not all homes had great lighting but we shot many portraits close to the windows or against the windows, which created a nice hazy atmosphere. Beggars couldn’t be choosers. Otherwise, an assistant with lighting would have been there but it was not an option because of the lockdown restrictions.

The only difficulty with posing instructions is that you need to know different body parts very well and be able to describe verbally where you would like each body part to be spatially. It’s not just left or right, up or down. It’s full 3D space. Also, it doesn’t help that what’s on the left on the photographer’s screen is on the right from the sitter’s perspective and vice versa. You almost need a new skill for that.
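The left/right flip described above is simple enough to sketch in a few lines. This is only an illustration, not something Snowflake ran: the function name `sitter_instruction` and the direction vocabulary are hypothetical, and it assumes the sitter is facing the camera and the live feed is not mirrored.

```python
# Hypothetical helper: turn a direction as seen on the photographer's screen
# into the instruction the sitter should hear.
# Assumes the sitter faces the camera and the feed is not mirrored.
SCREEN_TO_SITTER = {
    "left": "to your right",            # screen-left is the sitter's right
    "right": "to your left",            # screen-right is the sitter's left
    "up": "up",                         # vertical directions are unchanged
    "down": "down",
    "closer": "toward the camera",      # depth is described relative to the camera
    "further": "away from the camera",
}


def sitter_instruction(body_part: str, screen_direction: str) -> str:
    """Build a spoken instruction from a body part and a screen direction."""
    direction = screen_direction.lower()
    if direction not in SCREEN_TO_SITTER:
        raise ValueError(f"unknown direction: {screen_direction!r}")
    return f"Move your {body_part} {SCREEN_TO_SITTER[direction]}"


if __name__ == "__main__":
    # The photographer wants the chin further left on their screen.
    print(sitter_instruction("chin", "left"))
```

In practice the photographer does this translation in their head, which is exactly the new skill mentioned above.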

Other than that, the robot has a two-way video and audio feed. We could display posing references to the sitter on the screen. When the robot arrived at their location, it took some time to drive around the room to find the right composition and light for the portrait. Then we invited the sitter, discussed the idea to make sure everyone was comfortable with it, and the rest was two-way poetry until we had something that rhymed, as we like to say.

3. What camera equipment was used?

Full remote control and a live camera feed would have required serious upgrades and modifications to the robot if we had used a DSLR or a mirrorless option. We used a Huawei P20 Pro mobile camera instead. It has one of the best cameras that can shoot raw, has full manual control, and performs well in low light. The phone’s Android system allowed full remote control without any additional equipment, so we could both control the camera and see its live feed remotely. It was attached to the robot in a way that allowed tilting up and down, but its height on the robot was fixed.

4. Where did you get the robot?

The main component of this contraption was a telepresence robot. We worked with the not-for-profit organization Women in Data, so there was no budget to get something as funky as Atlas from Boston Dynamics (https://youtu.be/fn3KWM1kuAw?t=17). Since this was just a proof of concept, we got the cheapest available telepresence robot made in China (in its worst sense). As a telepresence robot, it is so bad that I would rather not advertise the manufacturer, but it was similar to OhmniLabs or Double Robotics in terms of its features. We installed the camera and remote control systems ourselves.

5. Do you see this as being a viable option for photography in the future?

Surgeons perform the most complex operations using remotely controlled robots. Military drones execute precise operations controlled remotely. A cafe in Japan uses robot waiters that are controlled remotely by paralyzed people. It’s not a compromise, it’s an improvement of human abilities.

People often find it difficult to imagine that they need something they haven’t experienced. But assume for a moment that an easy-to-use and fully functional robot exists.

Imagine a well-developed network of such robot cameras. You can book one from anywhere and have it delivered to the desired location within 30 min like some Uber Eats meal. It could be accompanied by an assistant who would be available in that area for a fee that would depend on their level of skill. There would be no need to own a camera; it would be a subscription or pay-as-you-go service.

Now imagine a client has a portrait assignment in NY but the photographers they like are in LA. One offers to book a robot that could be on location in 30 min, with the results immediately downloadable from the cloud. The other insists on booking flights, accommodation, and travel to the location the next day at the client’s expense, arguing that photography relies on building rapport in person. This choice will not be dictated by the photographers but by the clients. Eventually, it will be like an argument between someone who insists on hiring a helicopter to photograph a building in person and someone who insists he/she can shoot the same with a drone.

Before this happens, the first projects will be more like novelty photo shoots. The headlines will read something like ‘famous photographer shoots for Vogue using a robot’ or ‘Six Vanity Fair covers created by the same photographer on 6 continents in one day’.

Eventually, very skilled photographers will emerge who will use robots faster and better than conventional cameras. They will be like the current generation of gamers who are better at driving a car in the virtual world than in real life. Artificial Intelligence features will help guide their composition and lighting choices. Communication will be aided by technologies that translate between languages in real time. Also, disabled artists will be able to participate in the industry with equal opportunities.

This is not science fiction. The technology is already available. It’s just a matter of combining it in the right way. Those who do it first may replace the dying camera industry. So I don’t just think the concept is viable – I think it’s inevitable.

The world will soon be connected to the internet via Starlink satellites, and cloud computing will soon translate speech between different languages live, making us all speak one language. It’s the future of photography. Sometimes it takes a little pandemic to drive innovation, but problems always come with a positive kick in the bum.

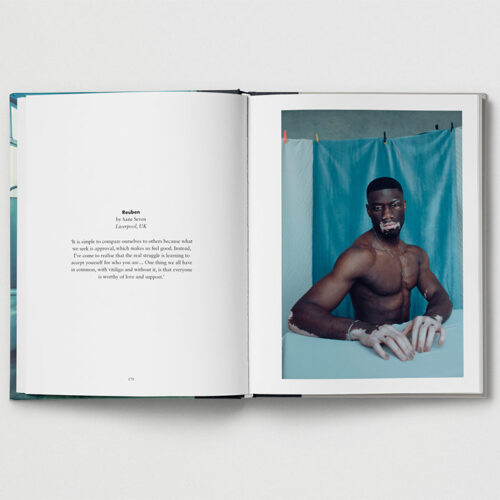
